nobody knows where AI is going, but it's going

how to manage fear, uncertainty and FOMO

In 2014 I attended a lecture on Cryptography that highlighted how Bitcoin works. I didn’t buy any.

In 2018 I brought down a production system with 400k active users. On a Friday at 5pm. And the senior engineers had already left the office.

What do these two stories have to do with the current AI race?

I’ll tell you.

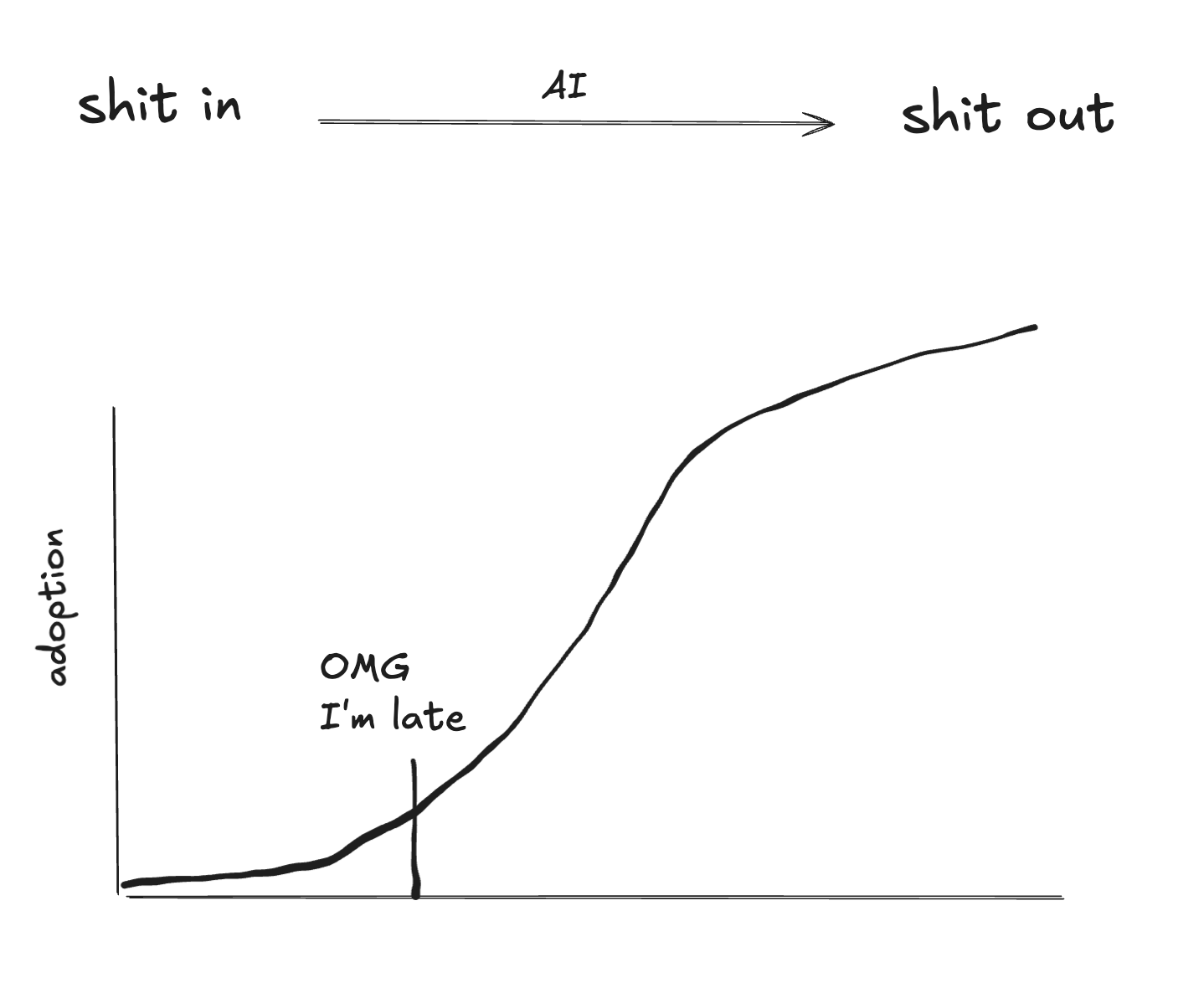

(a) we are still early, very early, and (b) shit in, shit out.

Let me expand on these failures of mine. But first, the summary for those with a short attention span.

I’ve spent the last year and a half on the cutting edge of AI. I built on top of harnesses, used early coding agents, worked on memory systems and set up long running agents with complex tooling.

There is much I have learned about AI as an emerging technology but even more about human nature.

So to the first point: we are still so very early.

90% of the stuff you read online is pure FOMO. People claiming they have all these crazy AI setups that do crazy stuff. And telling you that you are falling behind.

10% of people have actually unlocked incredible things with AI. It’s worth finding them and seeing what they do.

The second point: shit in, shit out.

Yes, you can generate more code, more text. But just because you can push out more shit doesn’t mean you should, as I learned when I decided to push another release on that Friday afternoon in 2018. I was a pretty shit programmer back then. And it broke a platform that thousands of people wanted to use.

There is a legitimate change happening in how we write code, design, write marketing copy, etc. But do not forget that in the end this has to work for your business and for your customers. And the AI does not feel pain about bringing down your system or insulting your clients. It doesn’t get fired.

I am far from an AI hater. I have changed how I work, I’ve built an AI-first startup. But it is my (and all our) job to look critically at technology and figure out how to use it to our advantage. And that requires a quiet morning, a cup of coffee and some actual thinking.

In 2014 I technically understood Bitcoin. But I did not understand Bitcoin. Do you know what I mean?

It took me years and deliberate thinking time to understand the value the technology could provide.

But all I was focused on for a while was thinking how late I was. To the party. To the price appreciation, to the technology trend of digital money.

When I actually understood Bitcoin in 2019 I was certain I was the last one in the world to do so. And as history tells us, I wasn’t the last one. Not by a long shot.

LLMs are barely a couple of years old, the ones that can perform more complex tasks at least. And while we should rush to understand how to apply these tools, there is also benefit in slowing the F down.

Workflows, systems and code will evolve. But if you know what an LLM is, and maybe know what a tool call is, or how to set up an MCP server... then you are definitely not late.

Anthropic released Opus 4.7 yesterday and updated their Claude app. Within an hour of internal use we hit three pretty bad bugs in the software.

Pretty annoying ones at that. A longer running job (that took up a lot of tokens) just randomly stopped, for example. We paid for that inference and Claude just crashed and we had to start from scratch.

Now, they can afford that, because they are selling something literally every knowledge worker wants to buy right now. They are selling intelligence.

But still, with all their talented people, they ship more and more buggy software. I’m not the only one noticing this. We have a quality problem.

And this gets us to the core issue. AI is trained on the average. And it doesn’t get fired.

The average creative writing, the average codebase. And if it ships a buggy feature or brings down a platform, it’s not going to get fired.

I was horrified when I brought down that platform with 400k users on it. I stayed in the office until it was fixed. I troubleshot with a junior colleague who was still around. I wrote a post-mortem and had to discuss it with the senior engineers.

Most importantly I learned from my mistakes. Turns out humans are really good at doing that.

So to summarize:

- you are not late. we are early.

- AI can help you create a lot of average quality code/words/designs.

- there is real opportunity in being early to a technology trend. But no need to stress and FOMO.

- in many places I (and probably you) am below average. AI’s average output is way better than what I could do. And it can do it 24/7.

- so quality and learning from mistakes remain something that humans (you!) should focus on. And offload the rest.